Is AI Commoditizing Coding — Or Quietly Widening the Gap?

Over the past year, AI tools like GitHub Copilot, Cursor, and ChatGPT have become increasingly adept at generating code. The promise is bold: AI will commoditize software development, making it possible for anyone to build apps, websites, and entire systems, regardless of technical background.

It’s a compelling narrative. But is it true?

After observing trends, conversations, and hands-on experiences across developer communities, I’ve started to see a more nuanced picture emerge. Rather than leveling the playing field, AI may be doing the opposite: amplifying existing disparities between those who deeply understand programming and those who don’t.

This post explores that divide. We'll dive into:

The fundamental advantages experienced developers gain from AI

Why beginners are especially vulnerable to overconfidence

The historical context of commoditizing technologies

Real-world consequences in teams, hiring, and startups

What responsible adoption should look like

A vision for bridging, not widening, the gap

Let’s begin with the heart of the divide.

1. The Experienced Developer’s Edge

For seasoned programmers, tools like Copilot act as a second brain. A good developer already knows how to:

Break down a problem into logical steps

Choose the right data structures and algorithms

Write readable, testable, modular code

Understand edge cases and performance tradeoffs

Ask good questions and debug effectively

When AI steps in, it doesn't replace these skills; it accelerates them. A senior engineer can:

Prompt more precisely because they know the outcome they want

Spot flawed AI output in seconds, not minutes

Use AI to generate scaffolding and boilerplate quickly, then reshape it to fit real constraints

Pair AI suggestions with design thinking, performance considerations, and systems knowledge

This creates a multiplier effect. With the same hours in a day, an experienced developer can become markedly more productive (perhaps 2x to 10x, by informal estimate), not because the AI is ‘smart’ but because the human knows how to wield it skillfully.

This is no different from what happened with calculators in math or Photoshop in design. Tools amplify mastery; they don't replace it.

2. The Beginner’s Dilemma: Illusions of Competence

Now consider a beginner.

They’ve watched a few tutorials. They know basic syntax. They open up Cursor or ask ChatGPT to “build a to-do app in React.” To their surprise, it works.

This experience creates a powerful illusion: "I know how to code."

But under the hood, they may not understand:

Why a particular function has certain arguments

How component lifecycles work

What async/await really implies in a full-stack setup

How to fix things when the AI generates buggy code

In other words, they might be copy-pasting without comprehension.
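To make the async/await item concrete, here is a minimal sketch in Python rather than React (the language swap is mine, and the names `fetch_todo_count` and `main` are hypothetical). It shows the kind of detail that is easy to miss when code is pasted without comprehension: calling an async function does not run it. It only creates a coroutine object, and nothing happens until that object is awaited.

```python
import asyncio


async def fetch_todo_count() -> int:
    """Hypothetical stand-in for a network request."""
    await asyncio.sleep(0)  # hand control back to the event loop once
    return 3


async def main():
    pending = fetch_todo_count()  # no await: a coroutine object, not an int
    looks_like_number = isinstance(pending, int)
    count = await pending  # awaiting is what actually runs the function body
    return looks_like_number, count


result = asyncio.run(main())
print(result)
```

Running this prints `(False, 3)`: the un-awaited call is not a number, even though the function is annotated as returning one. A beginner who has never seen that distinction has little chance of diagnosing the bug it causes.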

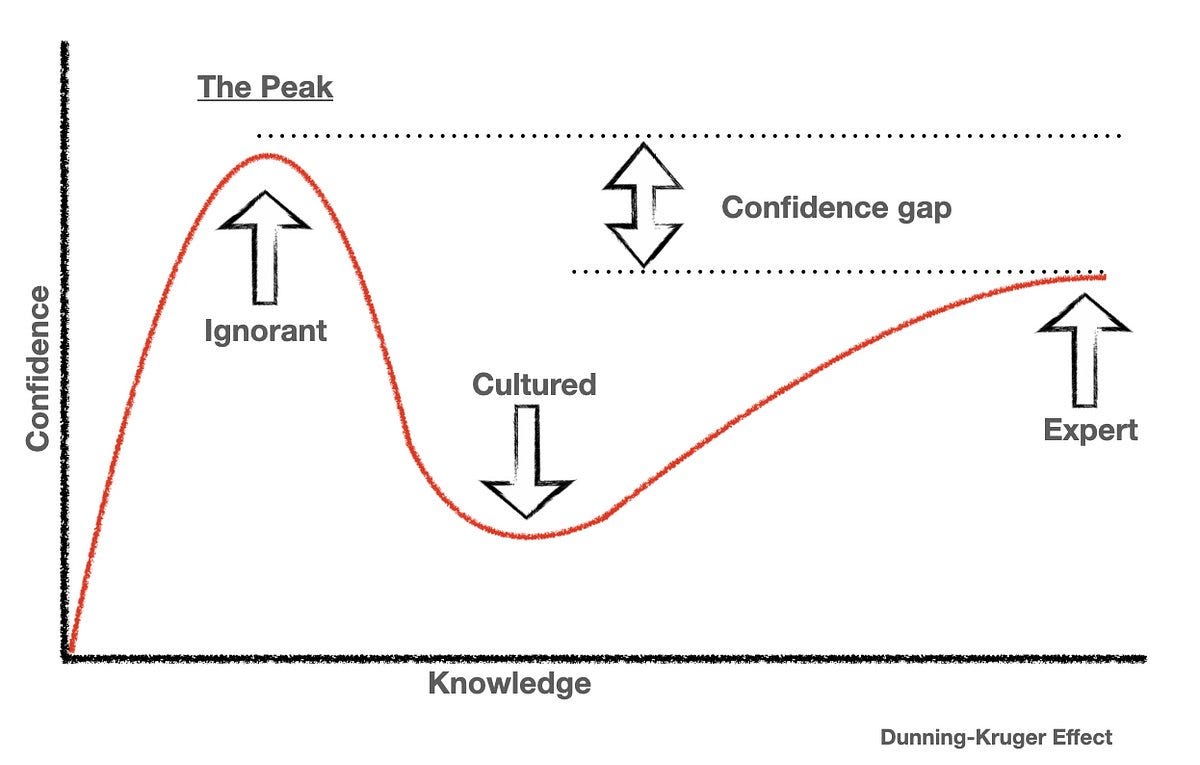

This is a textbook case of the Dunning-Kruger effect, a psychological phenomenon in which people with low ability in a domain tend to overestimate their competence in it. The danger isn't just producing flawed code; it's that beginners may not even realize it’s flawed.

They may build prototypes that work under ideal conditions but collapse when faced with real-world scale, security, or complexity. Worse, when things break, they may not know how to debug, because they never really understood how the system fit together in the first place.

AI hasn’t made them developers. It’s made them dependent.

3. This Isn't the First Time: Lessons from Tech History

This dynamic isn’t new. Throughout history, technological advances have promised to "commoditize" complex domains:

Word processors commoditized writing, but the best authors still understood narrative, tone, and structure.

Point-and-shoot cameras commoditized photography, but experienced photographers still mastered light, framing, and story.

Wix and Webflow commoditized web design, but UX experts still brought depth in usability, accessibility, and systems thinking.

In each case, technology lowered the barrier to starting, not to mastering.

The pattern holds for coding. AI lowers the cost of experimenting, prototyping, and building something simple. That’s wonderful. But the hard parts — logic, architecture, scalability, readability, long-term maintainability — remain.

In short: access isn’t mastery.

4. Real-World Implications: The Risk of Unchecked Enthusiasm

If this trend goes unexamined, it could lead to several troubling outcomes:

4.1 Teams Built on Fragile Foundations

Startups might hire engineers who’ve only ever coded with AI. These hires may be productive initially: fast iteration, lots of shipped features. But under pressure, they may struggle to:

Handle technical debt

Debug obscure edge cases

Write performant backend queries

Secure APIs properly

Collaborate effectively in large codebases

As complexity rises, cracks appear. Fragile foundations lead to missed deadlines, security incidents, and expensive rewrites.

4.2 A New Type of Technical Debt

AI-generated code can be clean on the surface but brittle underneath. It may lack:

Proper error handling

Test coverage

Edge case analysis

Long-term modularity

This is a new kind of technical debt — complacency-driven debt. It’s silent and invisible, until the system collapses under scale or ambiguity.
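A small illustration of "clean on the surface but brittle underneath", offered as a sketch under my own assumptions: both function names are hypothetical, and the first version is only meant to resemble typical generated code, not to quote any particular tool.

```python
def average_brittle(values):
    """Reads cleanly, but silently assumes a non-empty list of numbers."""
    return sum(values) / len(values)


def average_hardened(values):
    """Same calculation, with the hidden assumptions checked explicitly."""
    items = list(values)  # also accepts generators, which have no len()
    if not items:
        raise ValueError("cannot average an empty collection")
    for item in items:
        # bool is a subclass of int in Python, so reject it explicitly
        if isinstance(item, bool) or not isinstance(item, (int, float)):
            raise TypeError(f"expected a number, got {item!r}")
    return sum(items) / len(items)
```

Both versions agree on the happy path. The difference only shows at the edges: `average_brittle([])` fails with a `ZeroDivisionError` that says nothing about the real cause, while the hardened version names the assumption that was violated.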

4.3 Hiring Noise and Credential Inflation

If everyone can generate a portfolio of AI-generated apps, how do you distinguish real engineering talent?

We may soon face a wave of credential inflation, where bootcamps, certificates, and portfolios become less reliable as signals of capability. Interviews will have to go deeper. Companies may need to re-prioritize on-the-spot problem-solving, system design, and debugging under pressure.

5. But AI Can Be a Commoditizing Force — If Used Well

None of this means AI is bad for beginners. Far from it. In fact, it could be the best learning companion in history, if used with awareness and structure.

Let’s explore how.

5.1 Learning by Doing (With Guardrails)

AI can be a great teaching tool if the learner adopts the right mindset. For example:

Prompt: "Write a Python function to check if a number is prime."

Follow-up: "Explain what the code does line by line."

Next step: "What’s the time complexity? How can it be optimized?"

This approach turns AI into a Socratic tutor. You don’t just consume output; you interrogate it. You deconstruct it. You compare multiple implementations.

In this way, AI becomes an interactive textbook, one that adapts in real time, never tires, and always responds.
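As a rough sketch of where that three-prompt exchange might lead (my own illustration, not output captured from any tool; the function names are hypothetical), here is a straightforward primality check next to the optimized version the "time complexity" question tends to surface.

```python
import math


def is_prime_naive(n: int) -> bool:
    """Trial division by every integer from 2 to n - 1: O(n) divisions."""
    if n < 2:
        return False
    for d in range(2, n):
        if n % d == 0:
            return False
    return True


def is_prime_sqrt(n: int) -> bool:
    """Trial division by odd integers up to sqrt(n): O(sqrt(n)) divisions."""
    if n < 2:
        return False
    if n < 4:
        return True  # 2 and 3 are prime
    if n % 2 == 0:
        return False
    # Any composite n has a divisor no larger than its integer square root.
    for d in range(3, math.isqrt(n) + 1, 2):
        if n % d == 0:
            return False
    return True
```

A learner can then check the two against each other, for example with `all(is_prime_naive(n) == is_prime_sqrt(n) for n in range(200))`, and ask why the square-root bound is enough. Comparing implementations this way is one concrete form of interrogating the output instead of accepting it.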

5.2 Closing Feedback Loops Faster

For beginners, writing code and getting instant output accelerates the feedback loop. You don’t have to wait for a mentor or post in forums. You try, fail, iterate. This is invaluable for motivation and momentum, two things whose absence derails most learners early.

5.3 Exposure to Diverse Styles

By seeing how AI writes code in different paradigms (functional, object-oriented, procedural), learners get exposure they might not otherwise seek. This expands their mental models.

But again: this only works if they’re actively engaging, not blindly accepting.

6. The Path Forward: 5 Principles for Responsible AI-Coding Adoption

Here’s what I believe responsible developers, teams, and educators should consider:

1. Teach Prompting as a Literacy Skill

Prompt engineering should be taught alongside basic programming. Knowing how to ask AI for help, and knowing when not to, is a foundational skill now.

2. Separate Output from Understanding

Just because code “works” doesn’t mean it’s right. Always inspect, reason, and ask: Why does this work? What would break it? What assumptions am I making?

3. Keep Debugging and Reading as Core Skills

AI can write code, but debugging and reading large codebases are still human strengths. Prioritize these skills even more in an AI-native world.

4. Value Systems Thinking Over Syntax Memorization

Syntax is less important now. Instead, focus on architecture, modeling, and communication. These are harder to automate and far more valuable in teams.

5. Mentorship > Automation

No AI can replace the wisdom of a good mentor. Experienced developers should actively help juniors understand, not just produce.

7. Final Thoughts: The Irony of Intelligence

AI is not replacing programmers. It’s exposing them.

It reveals who truly understands code and who’s been coasting. It rewards curiosity, systems thinking, and craftsmanship, not copy-paste hustle.

In that sense, AI isn’t just a productivity tool. It’s a mirror.

So, is AI commoditizing coding?

Yes, it’s giving more people access.

But whether it truly empowers them depends on what they do with that access.

Mastery still matters. Maybe now more than ever.

Have you noticed this divide in your own team or learning journey?

How are you or your organization adapting to the rise of AI-assisted development?

What practices have helped you avoid the illusion of mastery?

Drop your thoughts in the comments. I’d love to learn from your perspective.